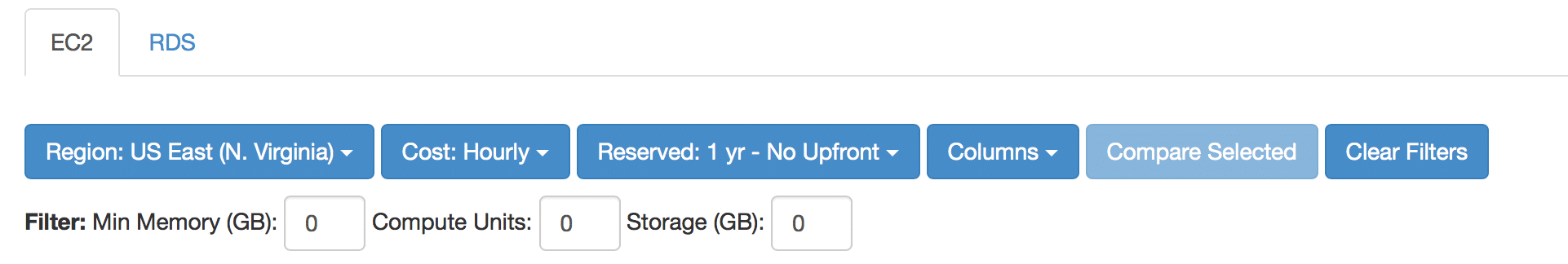

EC2Instances.info – A Handy Interactive Guide to AWS EC2 Instance Sizing and Pricing

One of the most challenging aspects of the AWS ecosystem is navigating the pricing and sizing options for EC2 instances. Luckily, there is a rather nifty tool, created by a community member and hosted on GitHub, which you can find at http://ec2Instances.info. The ec2Instances.info site lets you dig …