Raise your hand if you started the virtualization journey using oversized virtual machines <raises hand>. Let’s face it, we are in the process of making the same mistakes in the cloud that we made with early virtualization. It’s OK.

As many of the world’s leading open source and closed source traditional vendors lean forward to embrace the cloud, we can see that someone is not going to get the memo.

“Yeah if you could just go ahead and refactor everything on Docker and use microservices, that would be great.” Bill Lumbergh

The Infrastructure Over Apps Mistake

Why are we using the cloud? Numerous reasons drove us to look at the public cloud as a potential solution. They include:

- Changing from a capital to an operational expense model

- Utilizing self-service capabilities and APIs

- Leveraging scale-out capabilities to distribute applications

- Reducing infrastructure costs using commoditized cloud pricing

Well, we have the first two covered out of the gate. The challenge comes at number three, where we hit the first hurdle. A number of organizations that are looking to the cloud as a new place to host infrastructure are finding that their staff are simply re-platforming VMs from their current hypervisor to the cloud.

The problem is that lift-and-shift has become the more common way for folks to test out the cloud. It gives a skewed result that will most likely look like a failed implementation. The implementation has failed, but not because of the infrastructure. It failed because of the choice to switch infrastructure without thinking in the context of the application.

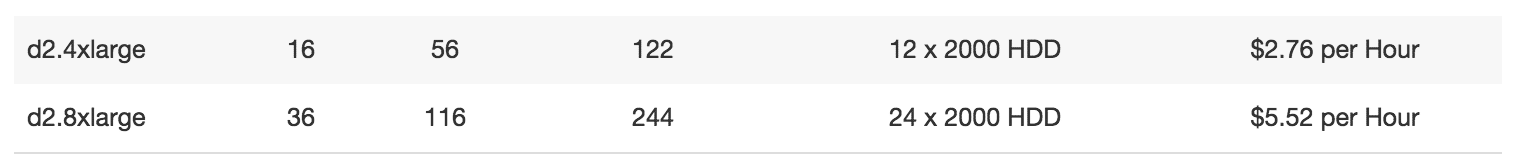

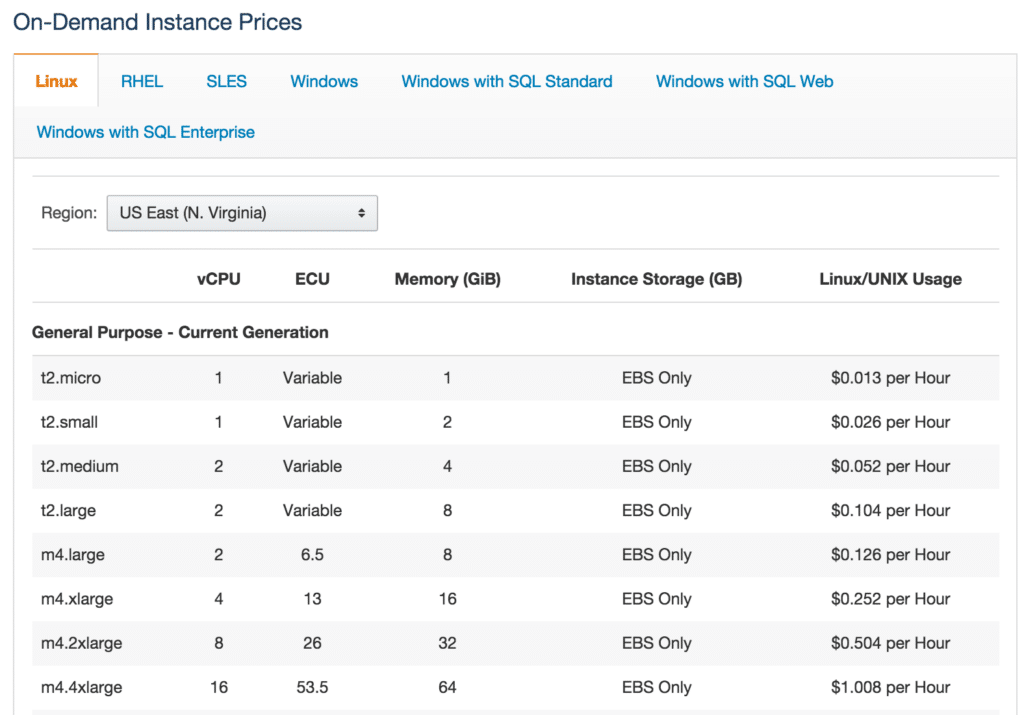

So, when the organizations that wanted to adopt scale-out infrastructure attempt their early migrations, they also hit a nasty surprise with point number four. The commoditized pricing that is available in the cloud quickly turns to sticker shock if workloads are sized like traditional virtual machines.

Only You Can Stop Forest Fires…and V2C

The practice of V2C (Virtual to Cloud) is only viable if you deploy very small instances. Even then, my recommendation is to always take a build-from-source approach and ensure that the only thing traversing the environments is data, as you back up and restore to the various locations where data is required.

Having done the V2C testing myself, I can say from experience that it is a terrible approach. Remember that cloud installations should be optimized for the thinnest possible underlying OS layer, reduced services, reduced I/O, and the smallest possible footprint, so that you can select small flavors for your cloud instances.

That 4 vCPU machine with 16 GB of RAM that ran in your vSphere environment without much issue is now going to run you a healthy $180 per month. That’s one of the reasons that AWS shows all of its pricing in hours. Illustrated by the hour ($0.252/hour), the price looks much more attractive. Hourly pricing is very effective when you are quiescing instances during lulls in utilization, but let’s be honest about how often that happens…not often.
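To make the arithmetic concrete, here is a minimal sketch of how the hourly rate turns into a monthly bill. It uses the $0.252/hour figure quoted above; the flat 30-day month and the `monthly_cost` function name are assumptions made for illustration, not anything taken from a provider's billing tools.

```python
HOURS_PER_DAY = 24
DAYS_PER_MONTH = 30  # assumption: a flat 30-day billing month


def monthly_cost(hourly_rate, running_hours_per_day=HOURS_PER_DAY, days=DAYS_PER_MONTH):
    """Return the monthly cost of one instance billed by the hour.

    hourly_rate           -- price per running hour, in dollars
    running_hours_per_day -- hours per day the instance is actually up
    days                  -- number of days in the billing month
    """
    if not 0 <= running_hours_per_day <= HOURS_PER_DAY:
        raise ValueError("running_hours_per_day must be between 0 and 24")
    return round(hourly_rate * running_hours_per_day * days, 2)


if __name__ == "__main__":
    rate = 0.252  # the hourly price quoted in the post
    # Compare an always-on instance with ones that are stopped during lulls.
    for hours in (24, 12, 8):
        print(f"{hours:>2} h/day -> ${monthly_cost(rate, hours):.2f}/month")
```

Left running around the clock, the instance comes out at roughly $181 for a 30-day month, which lines up with the figure above. The bill only drops meaningfully when the instance is actually stopped for a good part of each day.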

The Cloud Native Computing Foundation may need a twin group created to tackle monolithic cloud deployments. It’s happening already. The hope is that as organizations get more practice with cloud infrastructure, they will realize all of the benefits the cloud can offer by adapting their deployments to suit the newly adaptive and agile infrastructure.