Quick Demo of Tags and Grouping for Automation Policies in Turbonomic 6.1

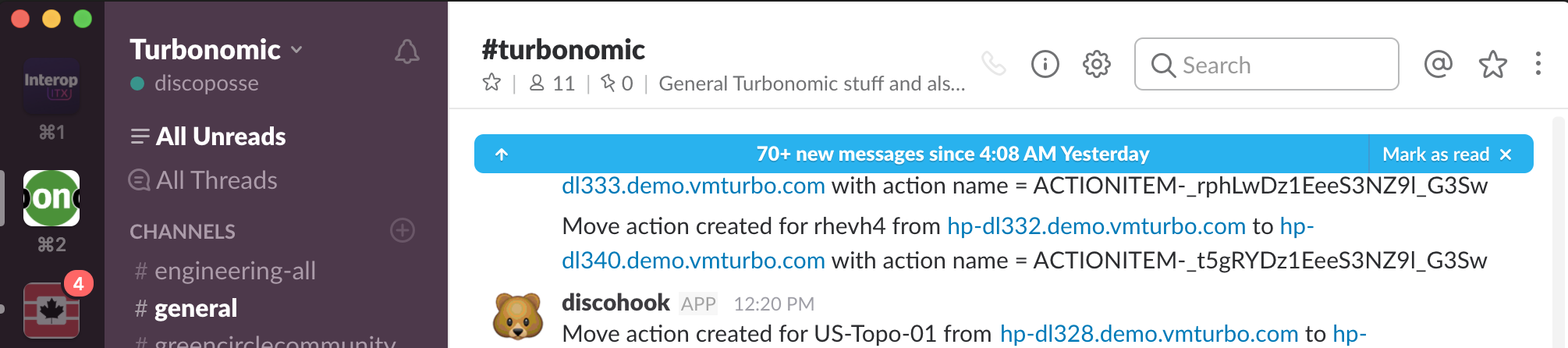

Tagging is a phenomenal way to identify your workloads: VMs, containers, cloud instances, and nearly every other layer of the application and virtualization/cloud stack. I’m often asked how tagging comes into play with Turbonomic, so here is a quick demo of how tagging is used to do things …